Nvidia Hopper GPU Architecture Announced, the “Next-Gen of Accelerated Computing”

Nvidia unveiled new computing technology today, as the company announced the arrival of the Nvidia Hopper GPU architecture, a new platform that the company calls the “next-gen of accelerated computing.” Hopper is the official successor to the Ampere architecture, and Nvidia expects the new platform to deliver a major performance leap over its predecessor.

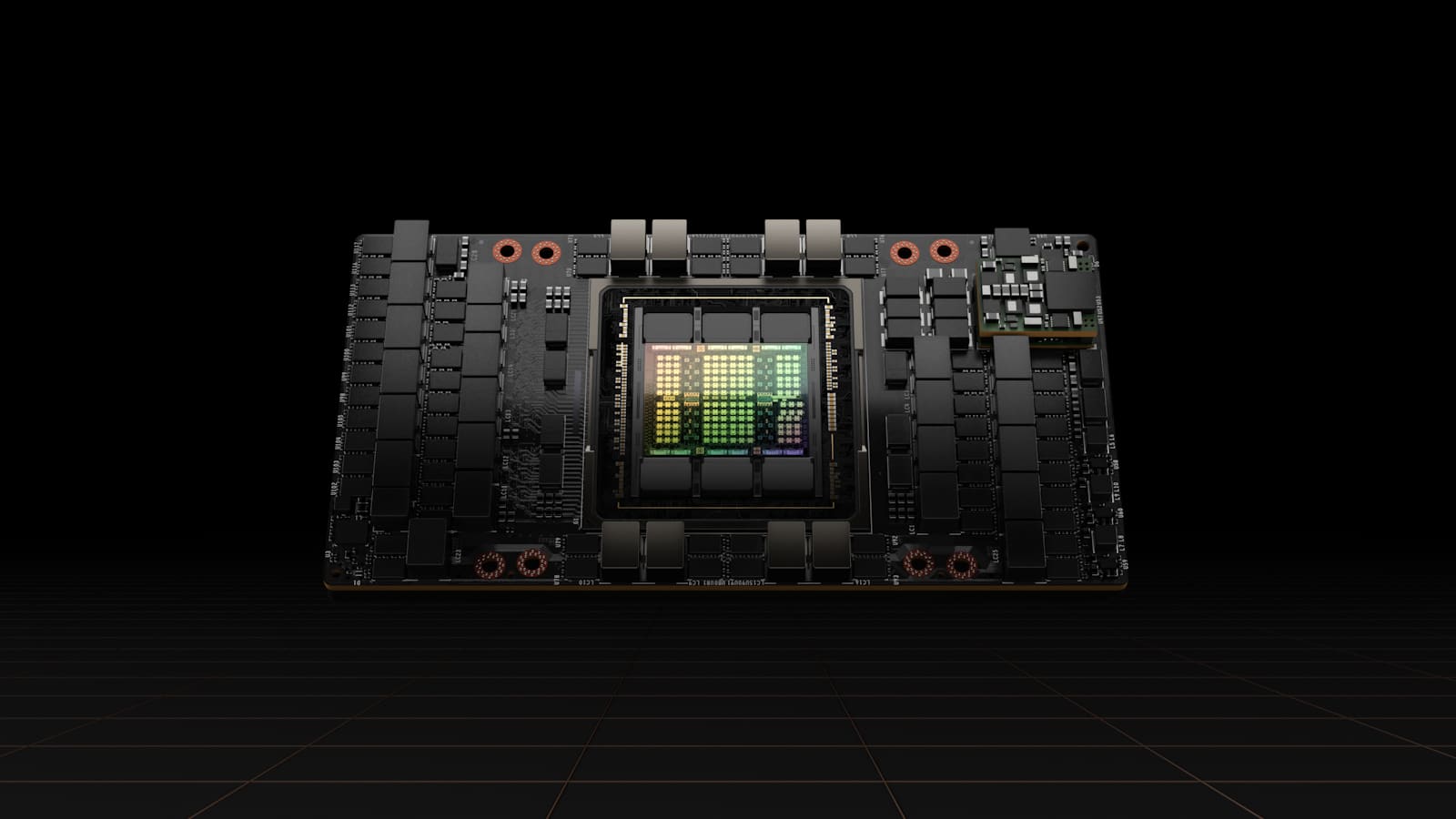

The first GPU built on the Hopper architecture is the H100. Here are some of the features of this new card, as described by Nvidia:

- World’s Most Advanced Chip — Built with 80 billion transistors using a cutting-edge TSMC 4N process designed for NVIDIA’s accelerated compute needs, H100 features major advances to accelerate AI, HPC, memory bandwidth, interconnect and communication, including nearly 5 terabytes per second of external connectivity. H100 is the first GPU to support PCIe Gen5 and the first to utilize HBM3, enabling 3TB/s of memory bandwidth. Twenty H100 GPUs can sustain the equivalent of the entire world’s internet traffic, making it possible for customers to deliver advanced recommender systems and large language models running inference on data in real time.

- New Transformer Engine — Now the standard model choice for natural language processing, the Transformer is one of the most important deep learning models ever invented. The H100 accelerator’s Transformer Engine is built to speed up these networks as much as 6x versus the previous generation without losing accuracy.

- 2nd-Generation Secure Multi-Instance GPU — MIG technology allows a single GPU to be partitioned into seven smaller, fully isolated instances to handle different types of jobs. The Hopper architecture extends MIG capabilities by up to 7x over the previous generation by offering secure multitenant configurations in cloud environments across each GPU instance.

- Confidential Computing — H100 is the world’s first accelerator with confidential computing capabilities to protect AI models and customer data while they are being processed. Customers can also apply confidential computing to federated learning for privacy-sensitive industries like healthcare and financial services, as well as on shared cloud infrastructures.

- 4th-Generation NVIDIA NVLink — To accelerate the largest AI models, NVLink combines with a new external NVLink Switch to extend NVLink as a scale-up network beyond the server, connecting up to 256 H100 GPUs at 9x higher bandwidth versus the previous generation using NVIDIA HDR Quantum InfiniBand.

- DPX Instructions — New DPX instructions accelerate dynamic programming — used in a broad range of algorithms, including route optimization and genomics — by up to 40x compared with CPUs and up to 7x compared with previous-generation GPUs. This includes the Floyd-Warshall algorithm to find optimal routes for autonomous robot fleets in dynamic warehouse environments, and the Smith-Waterman algorithm used in sequence alignment for DNA and protein classification and folding.
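
For readers unfamiliar with the dynamic programming mentioned in the DPX item, here is a minimal plain-Python sketch of the Floyd-Warshall algorithm. It runs on the CPU and does not use DPX or any Nvidia API. The function name `floyd_warshall` and the four-node `graph` are hypothetical, chosen only for illustration. The point is the inner update, which repeatedly adds two distances and keeps the minimum. That recurrence is typical of the dynamic programming workloads the announcement refers to, though the source does not describe how DPX maps onto it.

```python
import math

INF = math.inf  # marks "no direct route" between two nodes


def floyd_warshall(weights):
    """Return all-pairs shortest-path distances for a dense weight matrix.

    weights[i][j] is the cost of the direct edge i -> j, or INF if none.
    """
    n = len(weights)
    dist = [row[:] for row in weights]  # copy so the input is left untouched
    for k in range(n):          # allow node k as an intermediate stop
        for i in range(n):
            for j in range(n):
                # Dynamic programming step: add two partial routes, keep the minimum.
                via_k = dist[i][k] + dist[k][j]
                if via_k < dist[i][j]:
                    dist[i][j] = via_k
    return dist


# Hypothetical 4-node directed graph (illustrative data, not from the source).
graph = [
    [0, 3, INF, 7],
    [8, 0, 2, INF],
    [5, INF, 0, 1],
    [2, INF, INF, 0],
]

shortest = floyd_warshall(graph)
for row in shortest:
    print(row)
```

In this example the direct route from node 1 to node 0 costs 8, but the algorithm finds the cheaper path 1 → 2 → 3 → 0 with a total cost of 5. The triple loop performs n³ add-and-compare steps, which is why this class of algorithm is a natural target for acceleration.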

H100 has already drawn broad support from cloud providers: Alibaba Cloud, Amazon Web Services, Baidu AI Cloud, Google Cloud, Microsoft Azure, Oracle Cloud and Tencent Cloud all plan to offer H100-based instances. We can’t wait to see what the Nvidia Hopper GPU architecture could mean for gaming as well, since it is expected to take development to new heights.

Source: Nvidia
